- #COMMUNITY PENTAHO DATA INTEGRATION HOW TO#

- #COMMUNITY PENTAHO DATA INTEGRATION UPDATE#

- #COMMUNITY PENTAHO DATA INTEGRATION CODE#

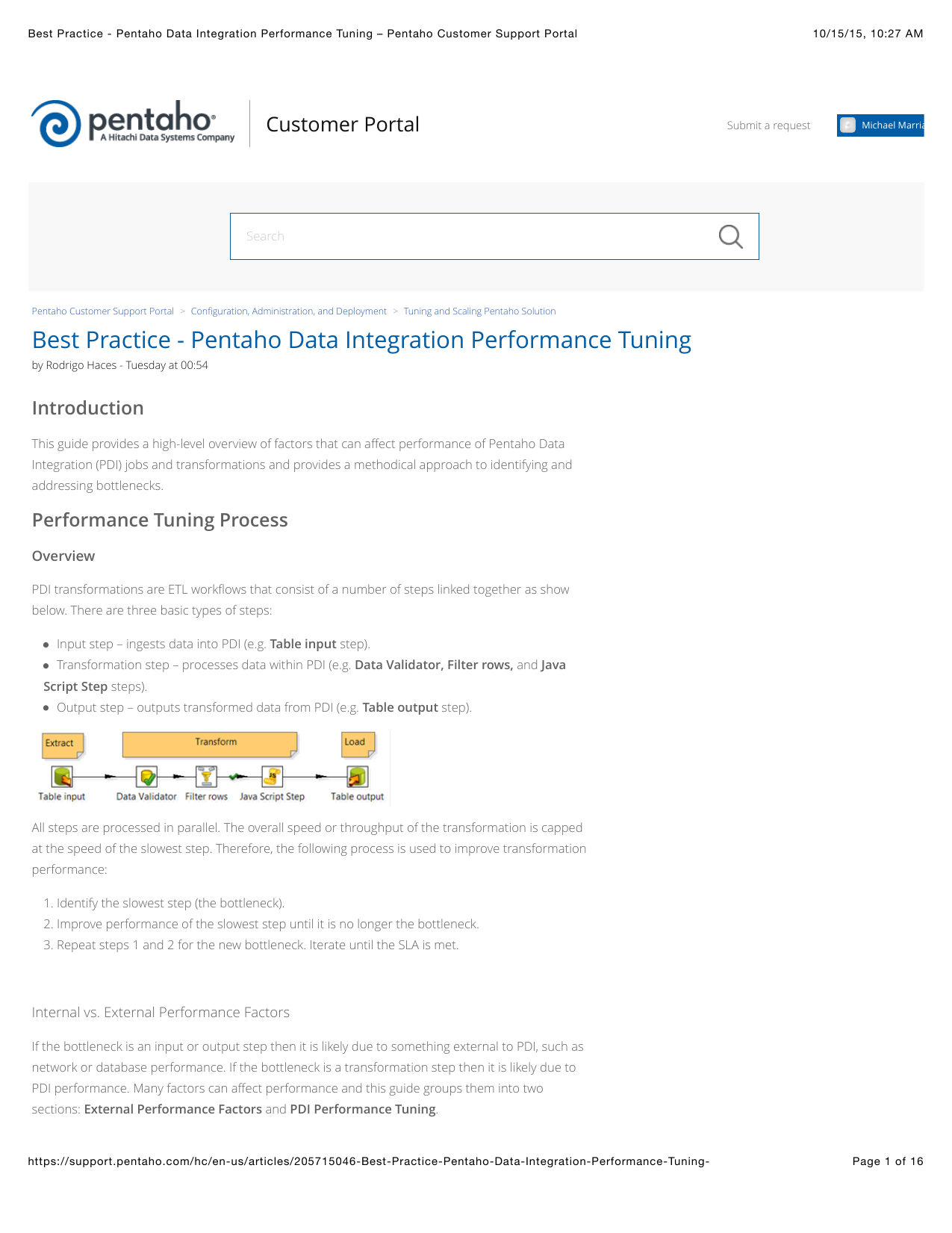

Airflow runs tasks, which are sets of activities, via operators, which are templates for tasks that can be Python functions or external scripts. Transformations can be defined in SQL, Python, Java, or via a graphical user interface.

Connectors: Data sources and destinations

Each of these tools supports a variety of data sources and destinations.

Airflow orchestrates workflows to extract, transform, load, and store data.

Stitch is part of Talend, which also provides tools for transforming data either within the data warehouse or via external processing engines such as Spark and MapReduce. Within the pipeline, Stitch does only transformations that are required for compatibility with the destination, such as translating data types or denesting data when relevant.

Pentaho supports a wide variety of pre- and post-load transformations through dragging and dropping more than two dozen kinds of operations onto its work area. In addition, users can drag and drop custom scripts in Python, Java, JavaScript, and SQL onto the canvas.
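To make "denesting" concrete, here is a minimal Python sketch of flattening a nested record into a single level of columns. It is not Stitch's actual algorithm; the function name `denest` and the `__` separator are assumptions chosen for illustration, and arrays are left untouched.

```python
from __future__ import annotations

from typing import Any


def denest(record: dict[str, Any], parent_key: str = "", sep: str = "__") -> dict[str, Any]:
    """Flatten nested objects into one level, joining keys with `sep`.

    Hypothetical helper for illustration only; lists are kept as-is.
    """
    flat: dict[str, Any] = {}
    for key, value in record.items():
        full_key = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            # Recurse into nested objects and merge their flattened keys.
            flat.update(denest(value, full_key, sep))
        else:
            flat[full_key] = value
    return flat


row = denest({"id": 1, "address": {"city": "Oslo", "geo": {"lat": 59.9}}, "tags": ["a"]})
```

Each nested key becomes a column name built from its path, which is one common way to fit nested data into a flat destination table.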

#COMMUNITY PENTAHO DATA INTEGRATION CODE#

Developers can write Python code to transform data as an action in a workflow. Airflow manages execution dependencies among jobs (known as operators in Airflow parlance) in the DAG, and programmatically handles job failures, retries, and alerting.
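The idea of running jobs in dependency order and retrying failures can be sketched with the Python standard library alone. This is not Airflow's API: `run_dag`, `max_retries`, and the task names are hypothetical, and a real scheduler would add scheduling, logging, and alerting on top.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def run_dag(tasks, dependencies, max_retries=2):
    """Run callables in dependency order, retrying each failed task.

    tasks: mapping of task name -> zero-argument callable
    dependencies: mapping of task name -> set of upstream task names
    Returns the task names in the order they completed.
    """
    completed = []
    graph = {name: dependencies.get(name, set()) for name in tasks}
    for name in TopologicalSorter(graph).static_order():
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                break
            except Exception:
                if attempt == max_retries:
                    raise  # out of retries: surface the failure
        completed.append(name)
    return completed


calls = {"n": 0}


def flaky_transform():
    """Fails on the first call, succeeds on the second."""
    calls["n"] += 1
    if calls["n"] < 2:
        raise RuntimeError("transient failure")


order = run_dag(
    {"extract": lambda: None, "transform": flaky_transform, "load": lambda: None},
    {"transform": {"extract"}, "load": {"transform"}},
)
```

Here `transform` fails once and is retried, and `load` only runs after `transform` has succeeded.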

A DAG is a topological representation of the way data flows within a system. Apache Airflow is a powerful tool for authoring, scheduling, and monitoring workflows as directed acyclic graphs (DAGs) of tasks.

- Import API, Stitch Connect API for integrating Stitch with other platforms
- Also available from the AWS store
- Compliance, governance, and security certifications
- Annual contracts. Options for self-service or talking with sales.
- Business intelligence, data integration, ETL
- Full table; incremental via binary logs or SELECT/replication keys
- Full table; incremental via change data capture or SELECT/replication keys
- Ability for customers to add new data sources

Talend's Forum is the preferred location for all Talend users and community members to share information and experiences, ask questions, and get support.

To move data from S3 to MySQL, one option is to use the Talend AWS components (awsget): fetch the file from S3 to your Talend server, or to the machine where the Talend job is running, and then read it from there.

JSON is a formatted string used to exchange data. Objects and arrays allow you to have nested data structures. The JSON_VALUE function returns an error if the supplied string is not valid JSON. An important step is to map the input data to the output model.

Talend's built-in visual data mapper also lets you easily manipulate complex data formats.
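The nested JSON structures and the JSON_VALUE behaviour mentioned above can be imitated in a few lines of Python. This is only a rough analogue: the real SQL function takes a path expression string, whereas the hypothetical `json_value` helper below takes a list of keys and indexes, and returning `None` for a missing path is an assumption.

```python
import json


def json_value(document: str, path: list):
    """Return the value found at `path`, or None if the path is absent.

    Raises ValueError when `document` is not valid JSON.
    """
    try:
        node = json.loads(document)
    except json.JSONDecodeError as exc:
        raise ValueError(f"invalid JSON: {exc}") from exc
    for step in path:
        if isinstance(node, dict) and isinstance(step, str) and step in node:
            node = node[step]  # descend into an object by key
        elif isinstance(node, list) and isinstance(step, int) and 0 <= step < len(node):
            node = node[step]  # descend into an array by index
        else:
            return None
    return node


sku = json_value('{"order": {"items": [{"sku": "A1"}]}}', ["order", "items", 0, "sku"])
```

Passing a malformed string such as `'{"order": '` raises `ValueError`, mirroring the error behaviour described for invalid input.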

#COMMUNITY PENTAHO DATA INTEGRATION HOW TO#

Below is a complete list of tutorials, webinars, videos, and blog posts to help you learn how to get the most value out of Open Studio: Tutorials and Demos.

One of the biggest strengths of XML is XPath, a query language for selecting subsections of an XML document.

When you combine Talend and Melissa, you can read and write to relational databases, fixed or delimited text files, XML, JSON, COBOL, and other file formats including Avro® and Parquet®, or Hadoop-based NoSQL stores such as HBase® and Hive®.

You can follow the procedure below to establish a JDBC connection to SharePoint. To add a new database connection to SharePoint data, expand the Metadata node, right-click the Db Connections node, and then click Create Connection.
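As a small illustration of the XPath point above, Python's standard `xml.etree.ElementTree` module supports a limited XPath subset. The catalog document and its element names below are made up for the example.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample document, for illustration only.
XML = """
<catalog>
  <book id="b1" lang="en"><title>ETL Basics</title><price>20</price></book>
  <book id="b2" lang="de"><title>Datenintegration</title><price>35</price></book>
  <book id="b3" lang="en"><title>Pipelines</title><price>15</price></book>
</catalog>
"""

root = ET.fromstring(XML)

# Select the titles of all books whose lang attribute is "en".
english_titles = [t.text for t in root.findall("./book[@lang='en']/title")]

# Select the second <book> child by position (XPath positions start at 1).
second_book_id = root.find("./book[2]").get("id")
```

A full XPath engine offers far more (axes, functions, arithmetic), but attribute predicates and positional selection already cover many extraction tasks.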

#COMMUNITY PENTAHO DATA INTEGRATION UPDATE#

You can use standard database APIs to insert or update JSON data in Oracle Database.
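The same insert-then-update pattern can be sketched with any standard database API. The example below uses Python's built-in `sqlite3` module as a stand-in, not Oracle: the `orders` table, its columns, and storing the document as plain text are all assumptions, and Oracle-specific JSON column types or constraints are not shown.

```python
import json
import sqlite3

# In-memory SQLite database used purely as a stand-in for a real server.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, doc TEXT NOT NULL)")

# Insert a JSON document as serialized text, using bound parameters.
conn.execute(
    "INSERT INTO orders (id, doc) VALUES (?, ?)",
    (1, json.dumps({"status": "new", "items": ["A1"]})),
)

# Update: read the document, change one field, and write it back.
(raw,) = conn.execute("SELECT doc FROM orders WHERE id = ?", (1,)).fetchone()
doc = json.loads(raw)
doc["status"] = "shipped"
conn.execute("UPDATE orders SET doc = ? WHERE id = ?", (json.dumps(doc), 1))
conn.commit()

(raw,) = conn.execute("SELECT doc FROM orders WHERE id = ?", (1,)).fetchone()
stored = json.loads(raw)
```

Bound parameters keep the JSON text out of the SQL string itself, which avoids quoting problems regardless of the database in use.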

However, it is worth mentioning that the next step after the component gets to a decent shape is to measure its performance which is an

To set this up, you first need to create a connection. In the resulting wizard, enter a name for the

Importing data from a JSON file.

Instead of keeping the whole history here, I decided to keep this old post updated with just the latest issue found in the Talend tFileInputJSON component.